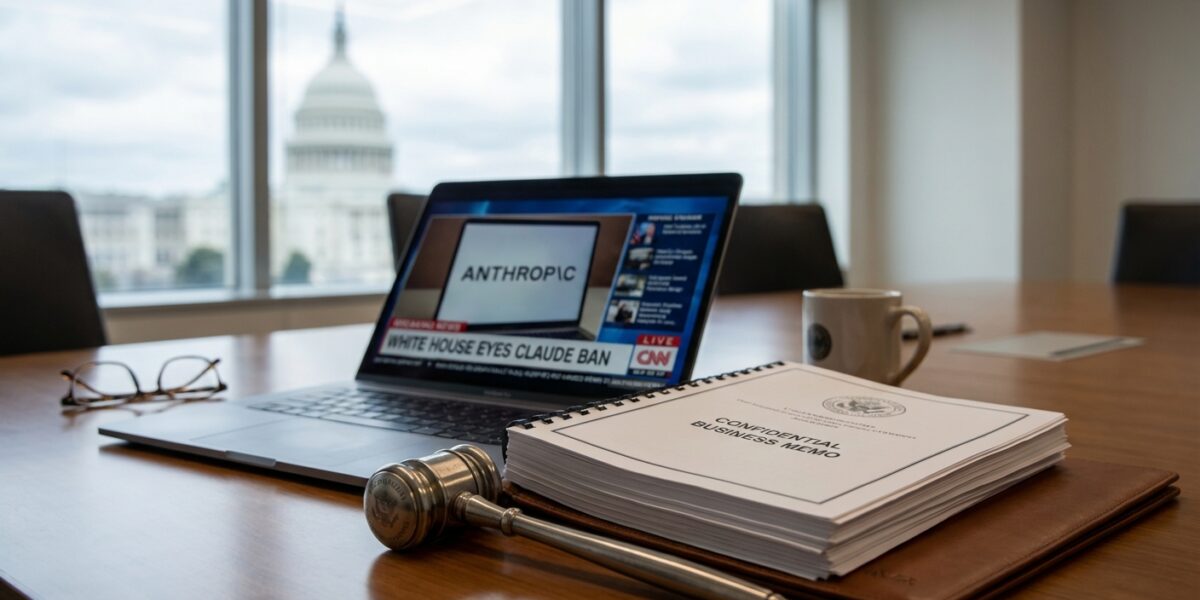

If you’re running a business that relies on AI tools (and in 2026, most of us are), the White House’s executive order showdown with Anthropic should have your full attention. Not because you’re a federal agency, but because what’s happening right now is a live preview of how government AI policy can blow up your entire stack with almost no warning.

Here’s the short version: President Trump announced on February 27, 2026, that he was ordering all federal agencies to immediately stop using Anthropic’s technology. Defense Secretary Pete Hegseth designated Anthropic as a supply chain risk — a label that’s normally reserved for companies tied to foreign adversaries, not AI safety startups in San Francisco. And as of mid-March 2026, the administration is moving toward a formal executive order to pull Claude from all executive branch agencies.

Anthropic fired back with a lawsuit in California, arguing the administration was illegally retaliating against speech protected by the First Amendment.

“There is no reason to transform this situation into an all-or-nothing moment.”

— Four U.S. senators, in a letter to the Trump administration regarding the Anthropic dispute

The company publicly stated that its federal contracts are already being canceled — and that private sector contracts are also in doubt, putting hundreds of millions of dollars at risk.

This isn’t just Washington drama. It’s a real-world case study in vendor risk, AI governance, and what happens when the tools you’ve built your workflows around become politically radioactive. Let me break down what’s actually going on and what it means for your business.

Historically, the supply chain risk designation has been applied to companies with documented ties to foreign adversaries: think Huawei, ZTE, or vendors with known connections to Chinese or Russian state actors. Slapping that label on a San Francisco-based AI safety company founded by former OpenAI researchers is a significant escalation. It signals that this particular tool is being stretched well beyond its original purpose.

Why does this matter for your business? Because supply chain risk designations ripple downstream fast. If you’re a government contractor, or even a vendor to a government contractor, using Claude in your workflows could create compliance exposure. That’s not a far-fetched scenario; it’s how these designations tend to work in practice, and the White House’s move toward a formal executive order makes it more urgent to pay attention.

And even if you’re a fully private business with zero government contracts, this label shapes perception. Procurement teams at large enterprises are already asking which AI vendors are “safe” to build on. A federal supply chain risk designation, even a politically motivated one tied to the broader Anthropic-Trump conflict, creates fear, uncertainty, and doubt that travels fast through buying committees.

What Anthropic’s Lawsuit Actually Argues (And Why It Matters for Government AI Policy)

Anthropic’s legal strategy is worth understanding because it reframes the entire dispute in constitutional terms. The company is arguing that the administration’s actions amount to illegal retaliation for protected speech — specifically, Anthropic’s public positions on AI safety and its refusal to abandon ethical guardrails under government pressure.

This is a First Amendment argument applied to a corporate AI vendor. It’s novel, and it matters. If Anthropic wins, it sets a precedent that the government can’t use its buying power to coerce AI companies into dropping safety policies. If it loses, or if the White House’s executive order moves forward before courts can intervene, it signals that federal AI procurement can be weaponized as a direct policy tool against private companies.

“Serious concerns about whether national security decisions are being driven by careful analysis or political considerations.”

— Senator Mark Warner, Vice Chair of the Senate Intelligence Committee, responding to the Trump administration’s directive against Anthropic, March 2026

Senator Warner’s concern points to something real: when political dynamics start driving technology buying decisions at the federal level, the collateral damage doesn’t stay in Washington. The private sector watches these signals and adjusts its own risk tolerance accordingly. The federal ban on Claude is already doing exactly that.

For businesses evaluating AI vendors right now, this lawsuit is a live experiment in vendor stability under political pressure. Regardless of where you stand on the ethics debate, a company fighting the federal government in court while its contracts are being canceled is a company whose near-term trajectory is genuinely uncertain. That uncertainty is a business risk — full stop.

Three Practical Implications for Businesses Using AI Tools in 2026

I’ve been building AI-assisted workflows into my marketing practice for a couple of years now. I use Claude regularly (I’ve written about it here before, including pieces on Claude Code features and parallel AI agent workflows), so I’m not writing this from the outside looking in. The White House’s Anthropic executive order and the broader landscape of government AI policy in 2026 are things I’m actively thinking through for my own work. Here’s where my head is at.

1. Vendor concen